Introduction

The invention of the chip, or integrated circuit, marks a pivotal moment in the history of technology. This small yet powerful component has transformed the way we process information, enabling everything from simple calculators to advanced supercomputers and smartphones. The journey of the chip began long before its formal introduction in the mid-20th century, with a series of innovations and discoveries in electronics and computing. Understanding this history not only sheds light on how chips revolutionized technology but also highlights the brilliant minds behind these innovations. This article explores the stages of chip development, the technological advancements that made the chip possible, and its impact on modern society.

The Precursor to the Chip: Early Computational Devices

The foundations for the invention of the chip were laid by early computational devices dating back to the 19th century. Before transistors and integrated circuits, various mechanical and electrical devices paved the way for electronic computing. Charles Babbage’s Analytical Engine, conceived in 1837, is often considered the first design for a general-purpose computer. Although the machine was never built in full, its design introduced concepts such as the use of variables and a structured approach to computation.

Vacuum tubes, first developed in the early 1900s, became the basis of electronic computing in the 1940s. They could amplify and switch electrical signals and were fundamental to the design of early computers such as the ENIAC (Electronic Numerical Integrator and Computer). This machine, completed in 1945, used nearly 18,000 vacuum tubes and could perform thousands of calculations per second, a monumental achievement for its time.

However, vacuum tubes had limitations, including size, heat generation, and poor reliability. These challenges led inventors and engineers to seek smaller, more efficient alternatives. Enter the transistor, invented in 1947 by John Bardeen, Walter Brattain, and William Shockley at Bell Labs. The transistor revolutionized electronic circuits, reducing size and power consumption while increasing reliability. Transistors replaced vacuum tubes in most applications and laid the groundwork for future chip technology.

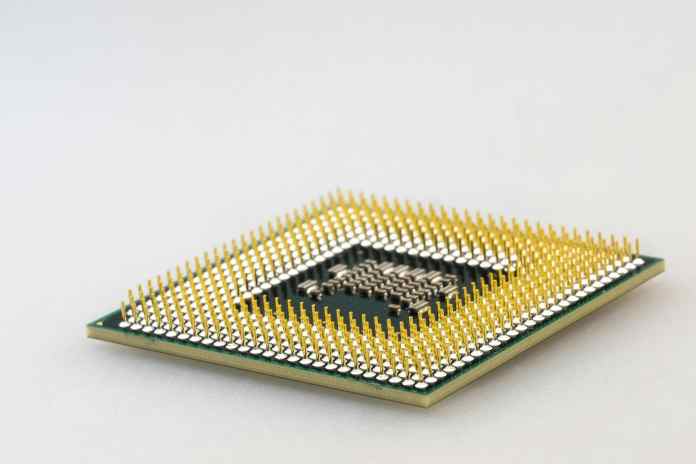

The late 1950s saw the integration of multiple transistors onto a single piece of semiconductor material, an innovation pioneered by Jack Kilby at Texas Instruments and Robert Noyce at Fairchild Semiconductor. This was the birth of the integrated circuit, or chip, which combined several functions in one compact unit. Kilby’s initial prototype, created in 1958, was a breakthrough that set the stage for future advancements in microelectronics.

The introduction of the chip allowed for miniaturization and mass production, drastically changing the landscape of computing and electronic devices. This technological leap enabled not only greater computational power but also a diversification of applications across various industries, from telecommunications to automotive systems.

As the 1960s progressed, the chip began to dominate the market, leading to the development of increasingly sophisticated systems. Gordon Moore, who later co-founded Intel, gave the industry its best-known benchmark with what became known as Moore’s Law. In 1965 he observed that the number of components on an integrated circuit was doubling roughly every year, and in 1975 he revised the pace to approximately every two years. This forecast became a driving factor for the industry and established a benchmark for progress in semiconductor technology.

Overall, the pre-chip era was characterized by a gradual evolution of ideas and inventions that ultimately led to the creation of the chip. Understanding this context emphasizes not only the technological advancements but also the visionary thinkers who recognized the potential of electronic computing and paved the way for the digital age.

The Birth of the Integrated Circuit: Pioneering Innovations

The invention of the integrated circuit, or chip, in the late 1950s was a groundbreaking moment in technology that set the stage for the modern computing era. The pioneering work of Jack Kilby and Robert Noyce was instrumental in this development, each contributing uniquely to the concept of integrating multiple components onto a single semiconductor substrate. Kilby’s first integrated circuit was a simple yet revolutionary device that combined resistors and transistors on a single piece of semiconductor material.

Noyce’s approach, in contrast, used silicon rather than the germanium of Kilby’s prototype, which offered improved performance. This divergence in techniques highlighted the creative solutions that engineers were exploring, leading to the chips we are familiar with today. Although the two men worked independently, the efforts of both inventors and their respective companies sparked a rapid proliferation of integrated circuits, igniting a technological revolution.

By the 1960s, the true potential of chips began to unfold as new applications emerged. Companies recognized that integrating multiple functions into a single chip could lead to significant cost reductions and enhanced functionality. The military and aerospace sectors were among the first to adopt integrated circuits, utilizing them in missiles, spacecraft, and advanced weaponry. The technologies developed during this period laid the groundwork for the electronics that would soon become ubiquitous in civilian life.

The commercialization of chips wasn’t without challenges. Early manufacturing methods were complex and fraught with difficulties, including issues with yield and reliability. However, engineers continually refined the production process, implementing cleaner environments and innovative techniques such as photolithography, which allowed for precise patterns to be etched onto semiconductor materials. As production techniques improved, the price of chips began to decline, leading to their adoption in consumer electronics.

The momentum generated by advancements in integrated circuit technology paved the way for the development of the microprocessor in the early 1970s. The first commercially available microprocessor, the Intel 4004, introduced in 1971, demonstrated how a single chip could serve as a central processing unit (CPU), a concept that revolutionized computing. This led to the growth of personal computers, giving individuals processing capabilities that were previously limited to large institutions.

As the 1970s progressed, the microprocessor brought dramatic declines in the cost of computing devices and an exponential increase in processing power. The implications were profound: computers became increasingly accessible to the general public, spurring the development of software, new applications, and, in the decades that followed, the rise of the internet.

In summary, the birth of the integrated circuit was characterized by the convergence of innovative thinking, technological advances, and the relentless pursuit of miniaturization and efficiency. The innovations from Kilby and Noyce catalyzed a wave of creativity that continues to resonate in the chip technology of today.

Advancements in Semiconductor Technology: The 1970s and Beyond

With the dawn of the 1970s, semiconductor technology experienced rapid advancements that transformed the landscape of the electronics industry. The introduction of integrated circuits set the stage for significant innovations, including the development of microprocessors that brought computational power to the masses. During this period, several key advancements in semiconductor technology reshaped the design, production, and applications of chips.

One of the primary driving forces behind the advancements was the increasing demand for higher performance and reduced costs in electronic devices. As consumer electronics such as calculators, video games, and early computers gained popularity, manufacturers sought to develop faster and more efficient chips. This spurred competition among companies, driving innovation in materials, design techniques, and fabrication processes.

A later and noteworthy advancement, arriving well after the 1970s, was the move from purely planar toward three-dimensional (3D) chip architectures. Traditional 2D designs faced limits on power consumption and heat dissipation as transistors continued to shrink. Engineers began to explore three-dimensional and stacked designs, which improved performance and efficiency by allowing components to interact more directly. Alongside the integration of entire systems onto a single die, known as System on Chip (SoC) architecture, this shift shaped the direction of later chip designs.

Another significant development was the enhancement of fabrication processes. The process technology underwent considerable refinement, leading to smaller feature sizes and increased transistor density. Moore’s Law guided this evolution, forecasting that the number of transistors on a chip would double roughly every two years. As a consequence, manufacturers continuously pushed the boundaries to create chips with increased processing capabilities and reduced power consumption.
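The doubling rule described above is simple arithmetic, and a minimal sketch can make it concrete. The function name `projected_transistors` and its parameters are hypothetical, chosen only for this illustration. The starting point of roughly 2,300 transistors in 1971 corresponds to the commonly cited figure for the Intel 4004 and is used here as an assumption. The result is an idealized projection, not a record of actual chips.

```python
def projected_transistors(base_count: float, base_year: int, year: int,
                          doubling_period_years: float = 2.0) -> float:
    """Project a transistor count assuming a fixed doubling period.

    Idealized model: count = base_count * 2 ** (elapsed / doubling_period).
    """
    elapsed_years = year - base_year
    return base_count * 2 ** (elapsed_years / doubling_period_years)


# Assumed starting point for illustration: about 2,300 transistors in 1971.
BASE_COUNT = 2_300
BASE_YEAR = 1971

for year in (1971, 1981, 1991, 2001):
    count = projected_transistors(BASE_COUNT, BASE_YEAR, year)
    print(f"{year}: about {count:,.0f} transistors")
```

Under these assumptions, ten years means five doublings, a factor of 32, and thirty years means fifteen doublings, a factor of 32,768. Real products followed the trend only approximately, so the numbers are best read as an order-of-magnitude guide.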

The introduction of complementary metal-oxide-semiconductor (CMOS) technology played a key role in this evolution. CMOS provided power efficiency and scalability, enabling further miniaturization of electronic components. This became particularly important for battery-powered devices, as efficient energy consumption extended battery life while maintaining performance.

As chips became more powerful and affordable, they found their way into a plethora of applications. The 1970s and 1980s saw the emergence of personal computers like the Apple II and the IBM PC, both of which relied heavily on chips to deliver their functionality. Innovations in microprocessor design, such as improved instruction sets and, in much later generations, multiple cores, allowed personal machines to perform complex tasks that were previously reserved for larger systems.

Moreover, the later introduction of standardized interfaces, such as the Peripheral Component Interconnect (PCI) bus in the early 1990s, facilitated interoperability among different devices, encouraging the development of diverse peripherals and software. This contributed to the burgeoning ecosystem surrounding personal computing and laid the groundwork for a tech-savvy society.

The evolution of semiconductor technology during the 1970s and beyond played a crucial role in shaping modern electronics. The sophisticated advancements in chip fabrication and design increased accessibility to computing power, fostering a cultural shift as technology became ingrained in daily life. The innovations pioneered during this era continue to influence the development of contemporary chips, perpetuating the cycle of progress and transformation.

The Role of Chips in Modern Technology: From Computers to Smartphones

In the contemporary world, chips serve as the backbone of technology, enabling a wide array of devices and applications that define our everyday experiences. From the simplest calculators to complex supercomputers, and, more recently, smartphones, the role of chips cannot be overstated. As technology has evolved, so too have the demands placed on chips, pushing engineers to innovate at an unparalleled pace.

One of the most significant milestones in the modern era is the integration of chips into mobile devices. The smartphone revolution, which began in the late 2000s, transformed how we communicate, access information, and interact with the world around us. Smart devices rely heavily on chips for their functionality, combining various capabilities such as processing power, graphics rendering, and connectivity into a single package. This integration enabled manufacturers to create sleek, powerful devices with capabilities that rivaled traditional computers.

The shift toward mobile computing has ushered in advancements in chip technology specifically designed for mobile applications. Arm-based processors, for example, have become the standard for mobile devices due to their energy efficiency and performance. The balance between power consumption and performance is crucial in mobile technology, as users demand longer battery life without sacrificing speed or functionality. As a result, engineers have focused on designing chips that optimize these characteristics, leading to innovative solutions such as heterogeneous computing, where specialized processors handle specific tasks.

Beyond smartphones, chips have become indispensable in various sectors, including automotive, healthcare, and the Internet of Things (IoT). In the automotive industry, chips are used for critical functions, from safety features like anti-lock braking systems to advanced driver-assistance systems (ADAS). The growing trend of electric vehicles further emphasizes the need for sophisticated chip technology to manage energy consumption and performance.

Moreover, the healthcare industry has witnessed significant changes due to chip innovation. Medical devices, such as portable monitoring systems and imaging technology, rely on advanced chips to provide accurate and timely information. The rise of telemedicine has also been facilitated by the integration of chips, allowing for remote patient monitoring and virtual consultations, which have proven invaluable during public health emergencies.

The IoT represents another frontier where chips play a transformative role. Connecting devices and systems through the internet has created opportunities for smart homes, industrial automation, and data analytics, all reliant on chips to perform real-time processing and communication. As the IoT ecosystem expands, the demand for energy-efficient chips capable of operating in diverse environments continues to grow.

In summary, chips have become the cornerstone of modern technology, driving innovation across a myriad of sectors. Their integration into everyday devices has fundamentally altered how we live, work, and connect. As we continue to advance technologically, chips will remain central to our progression, enabling new applications and experiences that we have yet to envision.

Future Trends in Chip Development: What Lies Ahead?

Looking ahead, the future of chip development is poised for continuous transformation as the demand for computing power and efficiency escalates. Emerging technologies, shifting consumer needs, and environmental considerations are influencing the trajectory of chip innovation, paving the way for exciting possibilities.

One key trend is the ongoing pursuit of miniaturization. As manufacturers aim to produce smaller and more powerful chips, advancements in fabrication techniques will play a crucial role. The industry has adopted advanced lithography methods, such as extreme ultraviolet (EUV) lithography, which allows smaller features to be patterned onto semiconductor materials, and it continues to refine them. This progression toward even smaller transistors, with features measured in nanometers, will enable a greater number of transistors to be packed onto a single chip, improving performance and efficiency.

In parallel, the growing emphasis on energy efficiency is driving a shift toward more sustainable chip designs. As consumer electronics transition to eco-friendly materials and processes, semiconductor manufacturers are adopting practices that reduce waste and energy consumption during production. The urgency to address climate change has prompted companies to innovate by developing chips that consume less power while delivering robust performance, aligning technological advancement with environmental responsibility.

The rise of artificial intelligence (AI) and machine learning has also influenced chip development. These fields require substantial computational power, pushing engineers to rely on graphics processing units (GPUs) and to design specialized chips, known as AI accelerators, that can efficiently process large datasets. The integration of chips optimized for AI applications will expand the capabilities of smart devices, enabling them to learn and adapt in real time.

Quantum computing represents another frontier in chip technology. Researchers are exploring quantum bits (qubits), which promise dramatically higher processing capabilities for certain classes of problems but also pose complex engineering challenges. Quantum chips could revolutionize fields such as cryptography, drug discovery, and complex system modeling. While still in its infancy, quantum computing may redefine computational possibilities in the coming decades.

The increasing prevalence of edge computing is also reshaping chip design. As IoT devices become ubiquitous, processing data closer to the source rather than relying solely on cloud computing is essential for real-time performance and reduced latency. Chips designed for edge computing will need to balance efficient data processing, limited power consumption, and the ability to communicate seamlessly within networked applications.

In conclusion, the future landscape of chip development is characterized by technological advancements that not only enhance performance but also consider environmental implications and societal needs. The interplay between miniaturization, sustainability, AI, quantum computing, and edge computing will pave the way for groundbreaking innovations that continue to redefine the role of chips in our increasingly digital world.

Conclusion

The history of the chip’s invention is a fascinating journey of innovation, driven by visionary thinkers and the relentless pursuit of excellence in technology. From the early mechanical computing devices to the sophisticated microprocessors of today, chips have become the foundational elements of modern civilization. Each advancement in chip technology has unlocked new possibilities, shaping the way we live, work, and connect with one another.

As we look to the future, continued advancements in chip development promise to drive our world forward, addressing contemporary challenges while paving the way for imaginative applications we have yet to conceive. While the components within a chip may be minuscule, their significance is monumental, influencing nearly every aspect of our daily lives.
