Introduction

Intel Corporation, founded in 1968 by Robert Noyce and Gordon Moore, is one of the most influential technology companies in the world. Known primarily for its contributions to the semiconductor industry, Intel has been at the heart of the digital revolution, providing the chips that power personal computers, servers, and many modern electronic devices. From its early days developing memory products to its pivotal role in the rise of microprocessors, Intel has shaped the computing landscape. This article explores the key milestones in Intel’s history, its technological innovations, and how it continues to evolve in a rapidly changing industry.

The Founding of Intel: Vision, Early Days, and Key Founders

Intel’s inception traces back to a vision of revolutionizing the semiconductor industry, spearheaded by its two founders, Robert Noyce and Gordon Moore. The company’s founding in 1968 was a response to the rapidly changing needs of technology and computing, and it marked the beginning of what would become one of the most powerful corporations in the technology industry.

The Vision of Innovation

Robert Noyce, often referred to as the “Mayor of Silicon Valley,” had previously co-founded Fairchild Semiconductor. Noyce’s departure from Fairchild came after frustrations over the company’s leadership and strategy, particularly in the rapidly advancing semiconductor field. Alongside him was Gordon Moore, a chemist and fellow Fairchild co-founder who in 1965 had made the observation later known as Moore’s Law: that the number of transistors on a chip doubles at a regular interval, eventually pegged at roughly every two years. Moore had also begun to see the limits of the existing semiconductor business and envisioned a company that would bring a new approach to technology. Both men were driven by the idea that the future of computing lay not in larger, slower systems but in smaller, faster, and more affordable semiconductors.

Noyce and Moore founded Intel (a contraction of “Integrated Electronics”) in Mountain View, California, in 1968. Andrew Grove, who joined at the outset as one of the company’s first employees, became a key third figure and would later serve as Intel’s CEO. The company’s initial focus was on developing semiconductor memory products, including dynamic random-access memory (DRAM). Intel’s first major success came with the development of a 1-kilobit DRAM chip, a product that would set the stage for its future in microprocessor development.

The Role of Gordon Moore and Robert Noyce

Gordon Moore’s intellectual prowess and focus on research and development became a cornerstone of Intel’s early success. His training as a chemist brought a deep understanding of semiconductor materials and processes, and his insight into the industry’s potential for exponential growth drove Intel’s early innovations. Moore’s Law, which he first proposed in 1965, would later become a guiding principle for Intel and the tech industry as a whole. Moore originally projected that the number of components on a chip would double every year; in 1975 he revised the pace to approximately every two years. The resulting exponential growth in transistor counts shaped Intel’s long-term strategy, pushing the company toward ever-smaller, faster, and more powerful chips.
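As a rough illustration of the doubling rule described above, the short Python sketch below projects a transistor count forward in time. The function name projected_transistors is made up for this example, and the starting point of about 2,300 transistors in 1971 (the commonly cited figure for the Intel 4004) is an assumption that does not come from the text above. Real chips only loosely follow the idealized curve.

```python
def projected_transistors(base_count, base_year, target_year, doubling_years=2.0):
    """Idealized Moore's Law projection: the count doubles every `doubling_years`."""
    elapsed = target_year - base_year
    return base_count * 2 ** (elapsed / doubling_years)


# Assumed starting point: roughly 2,300 transistors in 1971.
for year in (1971, 1981, 1991, 2001):
    print(year, round(projected_transistors(2300, 1971, year)))
```

With a two-year doubling period, each decade multiplies the count by 32, which is why small differences in the assumed pace lead to very different long-range projections.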

Noyce, on the other hand, brought a wealth of experience in leadership and innovation. His co-invention of the integrated circuit (developed independently of Jack Kilby at Texas Instruments), a fundamental breakthrough that allowed multiple electronic components to be placed on a single chip, earned him recognition as one of the most important figures in semiconductor history. Noyce’s entrepreneurial spirit and his drive to build a company around groundbreaking technology played a major role in shaping Intel’s corporate culture and approach to market competition.

The Early Days: A Focus on Memory Chips

Intel’s first product, the 3101 Schottky bipolar 64-bit static RAM (SRAM) chip, was released in 1969. While not a major commercial success, the 3101 set the stage for Intel’s involvement in the semiconductor industry. By the early 1970s, Intel had moved toward producing more advanced memory chips, including the 1103 DRAM chip, which became widely adopted and helped establish the company’s credibility in the market.

However, Intel’s decision to enter the memory chip market would soon be challenged by a combination of factors: rising competition from other companies, particularly from Japanese manufacturers, and the emerging opportunity in microprocessor development. Despite the fierce competition, Intel’s early years demonstrated a commitment to innovation and excellence, values that would continue to drive the company’s growth in the decades that followed.

The 1970s also saw Intel begin its shift toward a new focus: microprocessors. While the memory market was crucial to Intel’s early success, the future of computing seemed increasingly tied to a new type of chip that could perform calculations and control systems. This realization led to Intel’s breakthrough in 1971 with the Intel 4004, the first commercially available microprocessor.

Key Challenges in the Early Years

Intel’s early years were not without challenges. The company struggled financially in its first few years of operation, and it faced difficulties in attracting customers for its products. However, the support from venture capital, the company’s commitment to quality, and a rapidly changing technological landscape helped Intel overcome these obstacles. One of the company’s key moves was securing a significant partnership with the Japanese company Busicom, which would lead to the creation of the Intel 4004 microprocessor.

Despite these early challenges, Intel’s founders remained focused on their long-term vision of transforming the semiconductor market, and this vision would soon materialize. By the mid-1970s, Intel had made a successful transition into microprocessors, a move that would set the company on a path to becoming a global leader in computing technology.

Intel’s Breakthrough with the Microprocessor: The 4004 and Beyond

Intel’s shift from memory chips to microprocessors marked a pivotal moment not only in the company’s history but in the evolution of computing itself. The development of the Intel 4004 microprocessor, released in 1971, was a breakthrough that would pave the way for personal computers, fundamentally changing how we interact with technology today. This chapter of Intel’s history is characterized by both technical innovation and strategic decisions that would shape the future of the company and the entire tech industry.

The Challenge of the Early Microprocessor Market

In the late 1960s and early 1970s, the computing industry was dominated by large mainframe systems that required substantial space and power. These systems were often only accessible to large corporations, governments, or research institutions. The microprocessor, a single integrated circuit that implements a computer’s central processing unit, did not yet exist. The market was ripe for a product that could bring computing power to smaller, more efficient, and more cost-effective systems.

Intel’s first significant involvement in the development of microprocessors came when the company was approached by the Japanese company Busicom. Busicom was looking for a solution to replace the complex circuitry used in its desktop calculators. Initially, Busicom wanted Intel to provide a set of custom chips for its calculators, but Intel proposed something far more revolutionary: a single chip that could replace several separate circuits and control the entire calculator. This idea would form the foundation for the Intel 4004 microprocessor.

The Intel 4004: The Birth of the Microprocessor

Released in 1971, the Intel 4004 was the world’s first commercially available microprocessor. It was a 4-bit chip capable of performing basic arithmetic and logical operations, making it the heart of early computing devices. The 4004 was a major departure from traditional computing systems, as it integrated many different functions into a single chip, reducing the need for separate components and making computers more compact and affordable.

While the 4004 was not powerful by modern standards—operating at a clock speed of only 740 kHz and with just 16 pins—it represented a monumental achievement. It was designed for use in a variety of applications, including calculators, and was capable of executing approximately 92,000 instructions per second, a significant advancement over previous computing technologies. Intel’s breakthrough was not only technical but also strategic, as it effectively opened the door to new possibilities for personal computers, calculators, and other electronic devices.
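The link between the 4004’s clock speed and its instruction rate can be checked with simple arithmetic. The sketch below assumes that a basic 4004 instruction takes 8 clock periods, a timing detail that comes from the chip’s published specifications rather than from the text above; the function name is hypothetical.

```python
def instructions_per_second(clock_hz, clocks_per_instruction):
    """Peak instruction rate for a processor with a fixed-length instruction cycle."""
    return clock_hz / clocks_per_instruction


# 740 kHz clock, assumed 8 clock periods per basic instruction.
rate = instructions_per_second(740_000, 8)
print(f"{rate:,.0f} instructions per second")  # prints "92,500 instructions per second"
```

The result is a peak figure in line with the roughly 92,000 instructions per second quoted above; instructions that need more than one cycle lower the real-world rate.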

Expanding the Microprocessor Market

The success of the Intel 4004 was instrumental in sparking interest in microprocessors. It showcased the potential of integrated circuits to replace complex, bulky systems with smaller, more efficient alternatives. Intel quickly realized that there was a broader market for these types of chips and began to develop more advanced models. In 1972, Intel introduced the 8008 microprocessor, an 8-bit chip that offered greater processing power and versatility. It was followed by the 8080 in 1974, which became one of the most widely used microprocessors in early personal computers.

The Intel 8080 was the first 8-bit microprocessor to achieve mass adoption, and it was used in several important early computing devices, including the Altair 8800. The Altair 8800, released in 1975, is widely regarded as one of the first personal computers, and it played a key role in launching the personal computing revolution. The 8080’s success helped solidify Intel’s position as the leading producer of microprocessors, and it paved the way for future innovations in the company’s product line.

The Advent of the Personal Computer

Intel’s advancements in microprocessor technology coincided with the rise of personal computers in the 1970s and 1980s. The development of the Intel 4004, followed by the 8008 and 8080, gave rise to the age of the personal computer, as these microprocessors were the brains behind the machines that would soon become household staples. The microprocessor revolutionized computing by enabling devices that were smaller, cheaper, and more accessible than ever before.

In 1978, Intel introduced the 8086 microprocessor, a 16-bit chip whose lower-cost variant, the 8088, would power the first IBM Personal Computer in 1981. The 8086’s architecture, known as x86, became the foundation for the PC industry, with Intel continuing to produce increasingly powerful processors throughout the 1980s and 1990s. The widespread adoption of personal computers in homes and offices around the world was directly tied to the development of Intel’s microprocessors, which drove the success of companies like IBM, Dell, and Hewlett-Packard, among others.

Intel’s Strategic Decisions

The decision to focus on microprocessors and, by the mid-1980s, to exit the DRAM business on which the company had been built proved to be a prescient one. Intel’s early successes in microprocessor development were followed by a series of strategic decisions that would ensure its dominance in the semiconductor industry. By investing heavily in research and development and focusing on creating processors that could power personal computers, Intel set itself apart from competitors and cemented its position as a leader in the field.

Intel’s aggressive approach to innovation also led the company to enter the lucrative market for microprocessors in servers, workstations, and embedded systems, diversifying its product offerings and further solidifying its market dominance. Throughout the 1980s and 1990s, Intel’s consistent improvement of its microprocessors allowed the company to stay ahead of the competition and maintain its leadership role in the rapidly evolving technology sector.

A Legacy of Innovation

The impact of the Intel 4004 and its successors cannot be overstated. These chips were the foundation of the personal computer revolution and helped lay the groundwork for the broader technology industry we know today. They made it possible to build more powerful and compact devices, from desktop computers to laptops, smartphones, and other consumer electronics. Intel’s microprocessors became the standard in computing, and the company continued to push the boundaries of what was possible with each new generation of chips.

The legacy of the Intel 4004 microprocessor is not just one of technological achievement, but also one of entrepreneurial vision. It was the realization of a dream to create a chip that could change the world—and it did. Intel’s success in the microprocessor market was a key driver of the information age, and it set the stage for the development of the digital economy, opening up new possibilities for businesses, consumers, and innovators alike.

The Rise of Personal Computing and Intel’s Role

The 1970s and 1980s were transformative decades for both Intel and the computing industry as a whole. While the early history of computers was dominated by large, centralized mainframes, the advent of personal computing heralded a new era—one in which computers were no longer confined to corporate offices or research labs but became accessible to individuals and small businesses. Intel’s microprocessors played a crucial role in this revolution, providing the processing power necessary to make personal computers affordable, powerful, and widespread. Intel’s involvement in this market would not only reshape the company’s future but also the future of computing itself.

The Launch of the IBM Personal Computer and Intel’s Partnership

Intel’s pivotal moment in the rise of personal computing came in 1981 with the launch of the IBM Personal Computer (PC). IBM, a leader in mainframe and business computing, broke with its usual practice of building systems from proprietary components and chose off-the-shelf parts to bring its new personal computer to market quickly. Intel’s 8088 microprocessor, a 16-bit design with an 8-bit external data bus, was selected as the heart of the IBM PC, marking the beginning of a long and profitable partnership between the two companies.

The release of the IBM PC was a game-changer for the personal computing market. The machine offered a level of performance and capability that was previously unseen in home computing systems. It also set a standard for what personal computers should look like and how they should function. The inclusion of Intel’s 8088 processor in the IBM PC made the chip synonymous with the burgeoning personal computer market, and as IBM’s PC became a commercial success, so too did Intel’s microprocessor.

This collaboration laid the groundwork for Intel’s dominance in the personal computer market. The success of the IBM PC, powered by Intel’s 8088 chip, helped Intel cement its position as the leading producer of microprocessors for personal computers. In fact, the x86 architecture, introduced with the 8086 and shared by the 8088, would go on to become the standard for PC processors, with the vast majority of personal computers using Intel or Intel-compatible chips for decades to come.

Intel’s Role in Enabling the PC Revolution

Intel’s microprocessors made personal computing possible on a mass scale. Prior to the availability of affordable microprocessors, computers were large, costly, and largely impractical for the average consumer or small business. The invention of the microprocessor changed all of that by placing a computer’s central processing unit on a single integrated circuit, with memory and I/O handled by a small number of companion chips. This innovation not only reduced the size of computers but also lowered their cost, opening the door for a new generation of machines that could be used by individuals at home and small businesses around the world.

By providing powerful yet affordable processors, Intel played a pivotal role in accelerating the personal computer revolution. With its increasing market share, Intel became the go-to supplier for companies like Compaq, Dell, and Hewlett-Packard, all of whom built personal computers that relied on Intel chips. As the industry grew, Intel’s dominance in the processor market expanded, with the company’s chips powering everything from home PCs to servers, workstations, and eventually mobile devices.

The Impact of Microsoft and the Rise of the “Wintel” Era

As the personal computer market grew, so too did the software ecosystem that accompanied it. A critical factor in the rise of personal computing was the growth of software that could take advantage of the new hardware. IBM’s decision to use Intel’s microprocessors was paired with a partnership with Microsoft, which provided the operating system—MS-DOS—for the IBM PC. The combination of Intel’s powerful microprocessors and Microsoft’s software created a powerful platform that allowed personal computers to thrive.

This combination of Intel hardware and Microsoft software became so dominant that it came to be known as the “Wintel” platform, a blend of “Windows” and “Intel.” The success of the Wintel platform helped establish Intel as the undisputed leader in the personal computing space. The compatibility between Intel chips and Microsoft’s operating systems made it easy for other companies to build PCs, further accelerating the growth of the personal computer market.

The Wintel alliance was instrumental in shaping the landscape of personal computing throughout the 1980s and 1990s. As Microsoft continued to refine its Windows operating system and Intel released more powerful microprocessors, personal computers became faster, more reliable, and more user-friendly. This, in turn, helped expand the market for PCs to consumers and businesses alike, fundamentally changing how people lived and worked.

Expanding into the Consumer Market

As the personal computing revolution continued, Intel began to focus more directly on the consumer market. In 1993, Intel introduced the Pentium processor, which represented a significant leap forward in performance and multimedia capability. The Pentium became synonymous with fast, powerful personal computing, and its success helped Intel solidify its dominance in the market. The chip was a major milestone, with a performance level that made it suitable for everything from word processing to video games, digital graphics, and even early internet applications.

With the Pentium and later generations of processors, Intel also helped push forward other technological innovations, such as the development of faster memory, integrated graphics, and better multimedia processing. As a result, personal computers equipped with Intel chips became more capable of handling the increasingly demanding tasks associated with multimedia, internet browsing, and business applications.

Intel’s Competitive Advantage and Challenges

As the personal computing market expanded, so did competition. Companies like AMD, Cyrix, and VIA began producing microprocessors that directly competed with Intel’s chips. While Intel maintained a dominant market share, it faced pressure from these competitors to innovate faster and produce more cost-effective solutions. The company responded with its aggressive research and development initiatives, constantly releasing new generations of microprocessors that offered better performance, lower power consumption, and new features.

Intel’s competitive advantage, however, was rooted not only in its technological innovations but also in its ability to maintain a reliable and efficient manufacturing process. Intel was one of the few companies that could produce microprocessors in massive quantities, ensuring a steady supply of chips for the growing personal computer market. This, combined with its strong brand recognition and dominance in the market, helped Intel continue to lead the personal computing industry throughout the 1990s and into the 21st century.

The Ongoing Influence of Intel in Personal Computing

Intel’s role in the rise of personal computing was central. The company’s microprocessors were the driving force behind the proliferation of personal computers, and its continued innovation helped shape the technology landscape in the decades that followed. Today, Intel’s chips power a wide range of systems, from laptops and desktops to servers and data centers. The company’s contributions to the personal computing revolution have left an indelible mark on the modern world, and its microprocessors remain at the heart of countless innovations.

Intel’s leadership in personal computing paved the way for the digital age, a world in which computers are integrated into every aspect of daily life. The personal computing revolution, fueled by Intel’s processors, transformed the global economy, enabling new industries, fostering innovation, and changing how we communicate, work, and learn.

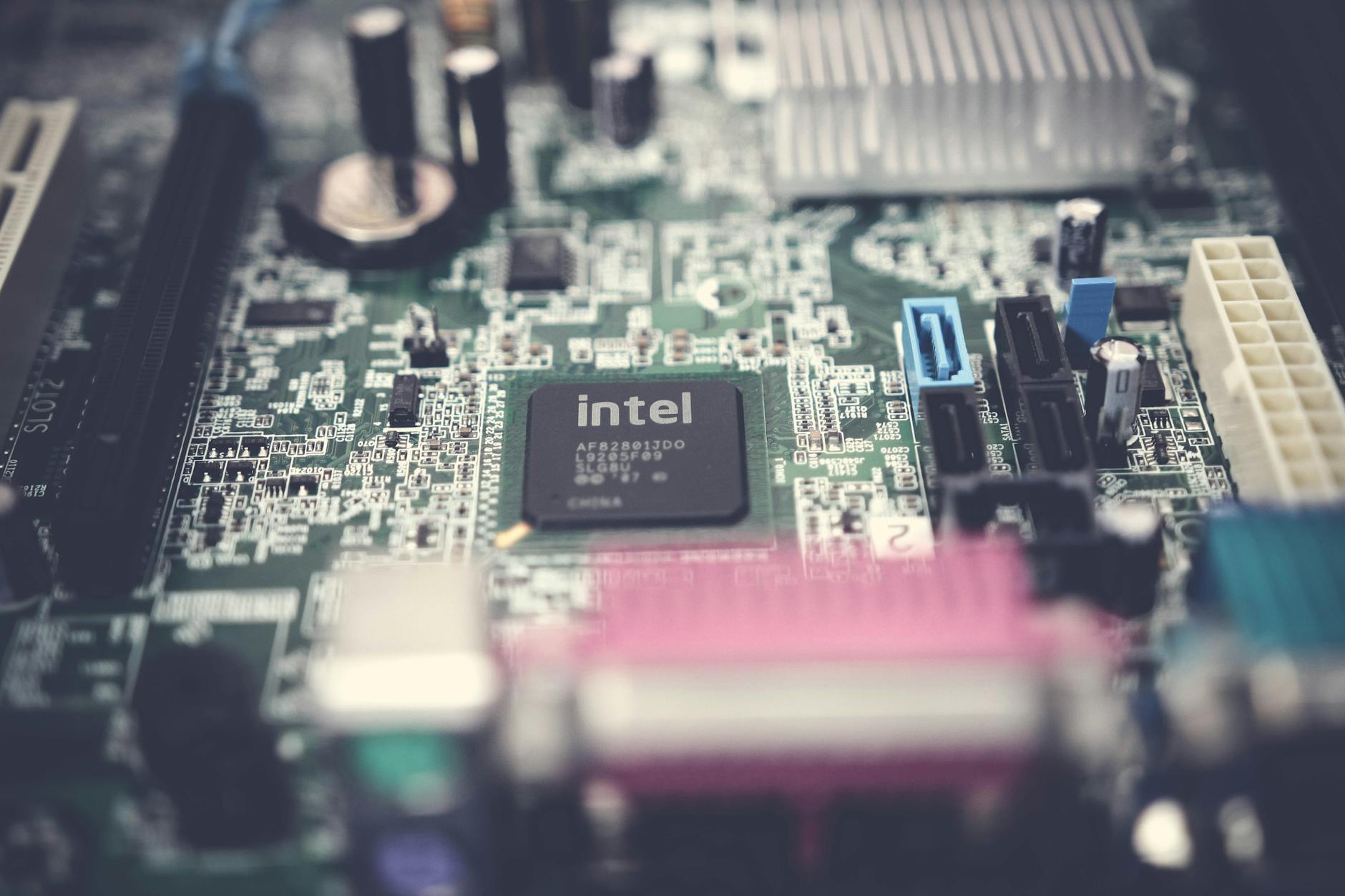

Photo by Filip Hajdóci on Pexels.com

Intel’s Strategic Innovation and Technological Leadership in the 1990s and 2000s

As the personal computing market matured, Intel faced a new set of challenges and opportunities in the 1990s and 2000s. The company’s dominance in the microprocessor industry was increasingly threatened by fierce competition, particularly from Advanced Micro Devices (AMD), and the rapid pace of technological advancements. In response, Intel focused its efforts on continuous innovation and adaptation to stay ahead of the curve. This period marked a time of technological breakthroughs, key product launches, and strategic shifts that would solidify Intel’s position as an industry leader in the evolving digital landscape.

The Pentium Era: Revolutionizing Computing Power

In 1993, Intel introduced the Pentium processor, a product that changed the trajectory of personal computing. Building on the success of the 486 microprocessor, the Pentium featured a superscalar architecture with two integer pipelines, which allowed the processor to execute more than one instruction per clock cycle when adjacent instructions did not depend on each other. This architectural improvement delivered a significant performance boost, making the Pentium a go-to choice for both business and home computing applications.
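A toy model can make the idea of superscalar execution concrete. The sketch below counts the cycles needed by a hypothetical in-order machine that issues up to two instructions per cycle, provided the later instruction does not read a result produced in the same cycle. The instruction format and the pairing rule are simplifications invented for illustration; they are not the Pentium’s actual pairing rules.

```python
def cycles_needed(program, issue_width=2):
    """Count cycles for an in-order machine issuing up to `issue_width` instructions per cycle.

    Each instruction is a (destination, sources) pair. An instruction cannot issue
    in the same cycle as an earlier instruction whose destination it reads.
    """
    cycles = 0
    i = 0
    while i < len(program):
        written = set()  # destinations produced during the current cycle
        issued = 0
        while i < len(program) and issued < issue_width:
            dest, sources = program[i]
            if written & set(sources):
                break  # depends on a result from this cycle; wait for the next one
            written.add(dest)
            issued += 1
            i += 1
        cycles += 1
    return cycles


program = [
    ("a", ()),          # a = constant
    ("b", ()),          # b = constant, independent of a
    ("c", ("a", "b")),  # c = a + b
    ("d", ("c",)),      # d = c * 2, depends on c
]
print(cycles_needed(program, issue_width=1))  # prints 4
print(cycles_needed(program, issue_width=2))  # prints 3
```

The two independent loads share a cycle, while the dependent pair cannot, which is why the gain from a second pipeline depends heavily on how the code is arranged.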

The Pentium was also well suited to multimedia applications, which were becoming increasingly important in the early 1990s. Its floating-point unit was substantially faster than the 486’s, a critical improvement for tasks like video editing, 3D graphics rendering, and scientific computations. The processor’s ability to deliver better performance for such tasks made it a highly sought-after product for emerging industries, including gaming, multimedia, and design.

One of the most significant moments in the Pentium era came with the release of the Pentium Pro in 1995, which was designed to handle enterprise-level applications such as database management and server tasks. While not a direct consumer product, the Pentium Pro solidified Intel’s position in the high-performance computing market, further diversifying its product offerings and ensuring its role in both personal and professional computing environments.

The Shift to the Internet Age: Coping with Changing Market Demands

In the late 1990s and early 2000s, the technology landscape underwent a fundamental shift with the rise of the internet and the growing importance of networking, digital media, and e-commerce. As businesses and consumers increasingly relied on the internet for work, communication, and entertainment, the demand for faster, more efficient processors escalated.

Intel responded to this new era of digital transformation with a focus on developing microprocessors that would meet the growing demands of the internet age. The introduction of Intel’s Celeron line of processors in 1998 catered to budget-conscious consumers, offering a cost-effective solution for home users and small businesses. Meanwhile, the company’s Xeon brand, also launched in 1998, focused on high-performance computing for enterprise-level applications, such as web hosting, data centers, and large-scale business operations.

The internet revolution, however, was not without challenges. Intel, despite its technological leadership, faced competition from companies like AMD, which gained ground with its Athlon processors, launched in 1999. The competition spurred Intel to accelerate its innovation efforts, with product lines such as the Pentium III (1999) and Pentium 4 (2000) designed to meet the evolving demands of the rapidly expanding internet economy.

Intel’s 45nm and 32nm Technologies: Leading the Charge in Semiconductor Manufacturing

One of Intel’s most significant achievements during this time was its leadership in advancing semiconductor manufacturing technology. Throughout the 2000s, Intel continually pushed the boundaries of chip manufacturing, leading the industry with its innovations in process technology.

In 2007, Intel launched its 45nm (nanometer) manufacturing process, which was a significant advancement in terms of power efficiency, speed, and overall performance. This move allowed Intel to pack more transistors into each chip while reducing the power consumption, helping to meet the growing demand for mobile devices and energy-efficient computing.

Intel’s leadership in semiconductor manufacturing continued in the following years, with the company’s introduction of the 32nm process in 2009. These advancements not only boosted the performance of Intel’s processors but also provided a competitive edge over rivals like AMD, who struggled to keep pace with Intel’s rapid technological advancements in manufacturing.
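A back-of-the-envelope calculation shows why a move from 45nm to 32nm matters. The sketch below treats the node name as a literal linear feature size and assumes transistor density scales with the inverse square of that size. Both are idealizations, since node names are partly marketing labels, and the function name is made up for illustration.

```python
def ideal_density_gain(old_node_nm, new_node_nm):
    """Idealized density gain from a linear shrink (area scales with length squared)."""
    return (old_node_nm / new_node_nm) ** 2


gain = ideal_density_gain(45, 32)
print(f"45nm -> 32nm: about {gain:.2f}x the transistors in the same area")
```

Under these assumptions, one process generation roughly doubles the number of transistors that fit in a given area, which matches the pace described by Moore’s Law.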

The Shift to Multicore Processors: Meeting the Demands of Modern Computing

As the need for more powerful processors continued to grow in the mid-2000s, Intel shifted its focus toward multicore technology. Rather than simply increasing the clock speed of individual processors, Intel began to introduce processors with multiple cores, allowing for greater parallel processing and improved performance in multitasking environments.

Intel’s first dual-core desktop processor, the Pentium D, arrived in 2005, but it was the Core line, introduced in 2006, that made multicore designs mainstream. The Core 2 Duo, with two processing cores, was particularly notable for its ability to provide faster performance with lower power consumption, setting the stage for Intel’s long-term strategy of building energy-efficient, high-performance processors.

Intel continued to improve its multicore technology, introducing chips with four, six, and even eight cores. These advances not only benefited personal computing but also helped power servers, workstations, and high-performance computing systems, driving growth in fields such as cloud computing, artificial intelligence, and scientific research.

Entering the Mobile Market: A Strategic Challenge

While Intel continued to dominate the personal computing and server markets, it faced increasing pressure from the rapidly growing mobile device sector in the 2000s. The rise of smartphones, powered by ARM-based processors from companies like Qualcomm, created new challenges for Intel’s traditional x86 architecture. Intel’s initial forays into the mobile space, including its Atom processors, were met with limited success, and the company faced difficulties in establishing a foothold in the smartphone and tablet markets.

Despite these setbacks, Intel remained committed to expanding its presence in mobile computing. In the early 2010s, the company targeted smartphones and tablets with new Atom Z-series processors, but these products failed to compete effectively with ARM-based processors, which offered better power efficiency and were already the established platform for mobile operating systems like iOS and Android.

However, Intel’s foray into the mobile market was not a total failure. It allowed the company to gain valuable experience and insight into the unique challenges of mobile computing, which would later inform its strategy in emerging technologies like 5G and Internet of Things (IoT) devices. In 2018, Intel began shifting its focus to data centers, artificial intelligence, and autonomous systems, where it saw long-term growth potential.

Innovation and Sustainability: Intel’s Investment in Future Technologies

As the company moved into the 2010s, Intel’s innovation efforts expanded beyond just microprocessors. The company invested heavily in emerging technologies, including artificial intelligence (AI), machine learning, and autonomous systems. Intel’s acquisitions of Altera (2015) and Mobileye (2017) signaled its intent to lead in these growing fields, with a focus on developing hardware and software solutions for the next generation of intelligent systems.

Intel also continued to drive progress in sustainability and energy-efficient computing. The company made significant strides toward reducing its carbon footprint and developing green computing technologies. Intel’s focus on sustainability would become an integral part of its broader strategic direction, as the company sought to maintain its leadership in the semiconductor industry while also addressing global environmental concerns.

Intel’s Role in Shaping the Future of Data Centers and Cloud Computing

As the internet continued to expand, the demand for data storage, processing, and transmission grew exponentially. This shift toward cloud computing and large-scale data centers marked a new phase in Intel’s strategic evolution. The company’s high-performance processors became the backbone of modern data infrastructure, playing a crucial role in enabling businesses, governments, and consumers to harness the power of cloud services, big data, and artificial intelligence. Intel’s investment in data centers and cloud computing would not only shape the future of enterprise IT but also redefine how information was processed and shared across the globe.

The Growth of Cloud Computing and Data Centers

In the 2010s, the global shift to cloud computing fundamentally altered the way businesses approached IT infrastructure. Traditional, on-premise servers were increasingly replaced by scalable, on-demand services provided by cloud providers such as Amazon Web Services (AWS), Microsoft Azure, and Google Cloud. These cloud services allowed companies to rent computing power, storage, and software over the internet, eliminating the need for extensive in-house data centers and hardware.

Intel’s microprocessors became the critical enabler of this cloud revolution. Data centers, which form the backbone of cloud computing, required immense computing power to handle vast amounts of data processing, storage, and retrieval. Intel responded to this demand by developing processors specifically optimized for data centers, including its Xeon line of processors, which became the dominant platform for cloud providers and enterprise data centers alike.

Xeon processors, with their high core counts, enhanced memory bandwidth, and support for advanced virtualization technologies, were ideal for data center environments where high performance, scalability, and reliability were essential. These processors powered everything from web servers to enterprise applications, helping companies manage the growing complexity of cloud infrastructure. As businesses migrated to the cloud, Intel played a critical role in providing the hardware that enabled this transformation.

Intel’s Partnership with Major Cloud Providers

Intel’s strong position in the data center market was also bolstered by strategic partnerships with the leading cloud providers. Amazon, Microsoft, Google, and other cloud giants all relied heavily on Intel’s Xeon processors to power their data centers and deliver cloud-based services to customers. Intel’s collaboration with these companies extended beyond just supplying processors; it also involved joint efforts in developing new hardware and software solutions to optimize performance, energy efficiency, and cost-effectiveness in the cloud environment.

For example, Intel worked with cloud providers to improve the performance of machine learning workloads, which became increasingly important as cloud services expanded into artificial intelligence and big data analytics. Successive Xeon generations added features aimed at the parallel processing demands of AI and machine learning, helping cloud providers offer services that could process large volumes of data in near real time. This collaboration helped establish Intel as a key player in the rapidly growing AI and big data markets and further solidified the company’s position at the center of the cloud computing revolution.

Intel’s partnerships with major cloud providers also extended to the development of specialized hardware to optimize cloud computing workloads. For instance, Intel introduced its Optane memory technology, which provided a high-speed memory solution for data centers and cloud servers. Optane memory, based on Intel’s 3D XPoint technology, offered significantly faster access to data compared to traditional storage systems, allowing cloud providers to offer lower-latency, high-performance services for demanding applications such as real-time analytics and AI.

The Role of Intel in Advancing Virtualization

Virtualization technology, which allows multiple virtual instances of servers to run on a single physical machine, was another key area in which Intel played a significant role in the data center and cloud computing boom. Virtualization enabled businesses to maximize their server utilization and reduce costs by consolidating workloads onto fewer physical machines. As virtualization became a cornerstone of cloud computing and data centers, Intel developed processors with built-in support for virtualization technologies, such as Intel VT-x (Intel Virtualization Technology).

Intel’s VT-x technology provided hardware assistance that let hypervisors run multiple virtual machines on a single physical server with less overhead, improving efficiency and resource utilization. The company also developed Intel Virtualization Technology for Directed I/O (VT-d), which lets virtual machines be assigned I/O devices directly and improves I/O performance and isolation in virtualized environments. These innovations helped Intel maintain its position as the dominant supplier of processors for data centers, as virtualization became integral to the operation of cloud services and large-scale enterprise IT systems.
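
On Linux, hardware virtualization support shows up as a CPU feature flag: vmx for Intel VT-x and svm for AMD’s equivalent. The following is a minimal sketch in Python, assuming a Linux-style /proc/cpuinfo layout. The names virtualization_flags and SAMPLE_CPUINFO are illustrative and not part of any Intel tool, and the sample text is made up. VT-d is not reported as a CPU flag, so it is not covered here.

```python
from pathlib import Path

# Feature flags that Linux lists in /proc/cpuinfo for hardware virtualization.
VIRT_FLAGS = {"vmx": "Intel VT-x", "svm": "AMD-V"}


def virtualization_flags(cpuinfo_text: str) -> set[str]:
    """Return the virtualization technologies advertised in cpuinfo text."""
    found = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            for flag in value.split():
                if flag in VIRT_FLAGS:
                    found.add(VIRT_FLAGS[flag])
    return found


# Hypothetical, abbreviated sample used only for illustration.
SAMPLE_CPUINFO = """processor : 0
model name : Example CPU
flags : fpu vme de pse tsc msr vmx sse sse2
"""

if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    # Fall back to the sample text on systems without /proc/cpuinfo.
    text = cpuinfo.read_text() if cpuinfo.exists() else SAMPLE_CPUINFO
    print(virtualization_flags(text) or "no hardware virtualization flag found")
```

A missing flag does not always mean the processor lacks the feature; it may simply be hidden from the operating system, for example inside a virtual machine.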

Intel’s Innovations in Data Center Storage and Memory

Intel’s contributions to the cloud and data center market went beyond processors. The company also made significant advances in storage and memory technologies, which are critical components of any data center infrastructure. Intel’s solid-state drive (SSD) technology, for example, became a key enabler for high-performance data storage in data centers and cloud environments. SSDs provided faster data access speeds and lower latency compared to traditional hard disk drives (HDDs), allowing data centers to process information more quickly and efficiently.

Intel’s Optane technology also played a notable role in data center storage. Optane, which sat between DRAM and NAND flash in both speed and cost, allowed data centers to accelerate workloads and improve performance in high-demand applications, such as artificial intelligence, big data analytics, and high-performance computing. This technology helped cloud providers meet the demands of modern computing workloads, which were becoming increasingly data-intensive and required fast data retrieval.

In addition to storage innovations, Intel also focused on enhancing memory systems for data centers. The company introduced innovations like Intel Memory Drive Technology, software that let Optane SSDs act as an extension of system memory so that larger working sets could sit in a memory-like tier rather than on conventional disk storage, further speeding up data processing. These advancements allowed Intel to provide complete solutions for data centers, combining high-performance processors, storage, and memory technologies to meet the growing demands of cloud computing, AI, and big data.

Intel’s Focus on Energy Efficiency and Sustainability

As data centers continued to grow in size and complexity, energy efficiency became a crucial factor in their design and operation. The sheer scale of global data centers, which require vast amounts of electricity to run and cool, made energy consumption and environmental impact key concerns for cloud providers and enterprises alike. Intel responded to these concerns by developing energy-efficient processors and technologies designed to reduce the environmental impact of data centers.

Intel’s processors, such as the Xeon Scalable series, were optimized for energy efficiency, delivering high performance while consuming less power. This was particularly important for cloud providers and large-scale data centers, where energy costs represent a significant portion of operational expenses. Additionally, Intel invested in sustainable manufacturing practices, aiming to reduce the carbon footprint of its production processes. These efforts helped Intel meet the growing demand for sustainable technologies and allowed the company to remain competitive in a market that increasingly prioritized environmental responsibility.

The Future of Intel in Cloud and Data Center Computing

Looking ahead, Intel aims to continue its leadership in the data center and cloud computing markets. The company’s ongoing investments in artificial intelligence, machine learning, and advanced memory and storage technologies are intended to keep it a key player in the rapidly evolving cloud computing landscape. Intel’s commitment to innovation in the data center space will also be critical as the industry moves toward the next frontier of computing, including 5G, edge computing, and the Internet of Things (IoT).

As businesses and consumers increasingly rely on cloud-based services and AI-driven applications, Intel’s processors are likely to keep powering much of the infrastructure that makes these technologies possible. With its broad portfolio of technologies designed to meet the demands of modern data workloads, Intel is positioned to remain an important part of the cloud and data center revolution for years to come.

Intel’s Advances in Artificial Intelligence and Machine Learning

As artificial intelligence (AI) and machine learning (ML) technologies gained prominence in the 2010s, Intel positioned itself at the forefront of the AI revolution, leveraging its long history of microprocessor development to fuel the next generation of intelligent systems. AI and ML are transforming industries such as healthcare, finance, autonomous vehicles, and robotics, creating unprecedented opportunities for companies to leverage data for decision-making and automation. Intel recognized the vast potential of these technologies and shifted its focus to create specialized hardware and software solutions to support the computational needs of AI and ML workloads.

Intel’s Transition to AI and Machine Learning

Intel’s initial involvement in AI was rooted in the computational needs required for traditional AI workloads. Early AI systems were based on rule-based algorithms and logic, but as machine learning gained traction, especially with deep learning models, the need for higher computational power became apparent. Machine learning algorithms, particularly deep neural networks (DNNs), require enormous processing capabilities to handle the vast amounts of data needed to train models and perform predictions. This spurred Intel to innovate and create products designed specifically for these computational challenges.

Intel began by refining its existing portfolio of processors to support AI and machine learning workloads. The company’s Xeon processors, for instance, were enhanced with specialized instruction sets and optimizations to handle the unique demands of ML. These processors played a pivotal role in accelerating AI training and inference tasks within data centers, as deep learning models required significantly more processing power than traditional applications.

However, Intel soon realized that in order to remain competitive in the rapidly evolving AI market, it would need hardware specifically designed for AI acceleration. This led to a series of acquisitions, including Altera (2015), Nervana Systems (2016), and Mobileye (2017), which strengthened Intel’s capabilities in programmable logic, deep learning accelerators such as the Nervana Neural Network Processor (NNP), and autonomous driving technologies.

Nervana Neural Network Processor (NNP)

In 2016, Intel made a bold move into the AI hardware space by acquiring the startup Nervana Systems, whose designs became the basis for the Intel Nervana Neural Network Processor (NNP). The NNP was designed to accelerate deep learning workloads, which involve the training of neural networks using large datasets. General-purpose CPUs were not designed to efficiently process the parallel computations required by deep learning models, so specialized hardware was needed. The NNP’s architecture was tailored to the specific needs of machine learning workloads, offering significant improvements in speed and efficiency compared to traditional processors.

The Nervana NNP, developed in both training (NNP-T) and inference (NNP-I) variants, was engineered to handle large-scale neural networks and the massive amount of data required for training AI models. The chip featured a highly parallel design with a focus on maximizing memory throughput and minimizing data transfer bottlenecks, key components for improving deep learning efficiency. While the Nervana NNP was a significant step forward, Intel wound down the line in 2020 and shifted its focus to the Habana Gaudi AI processors it had gained through acquisition.

The Role of Intel Habana Gaudi in AI and ML

In 2019, Intel acquired Habana Labs, an Israeli AI startup known for its AI processors. Habana’s portfolio included the Gaudi processor for training deep learning models and the Goya processor for inference. Gaudi featured a custom architecture designed to provide high throughput and to scale across many accelerators.

Intel’s acquisition of Habana Labs significantly bolstered the company’s AI capabilities, positioning it as a competitor in the AI accelerator market. The Gaudi processor was designed to compete with offerings from companies like Nvidia, which had long been the dominant player in the AI hardware space with its GPUs. With its ability to scale across large data centers, Gaudi became a critical part of Intel’s AI strategy, and Intel positioned it as a more cost-effective alternative to GPUs.

Gaudi accelerators were deployed alongside Intel’s Xeon-based servers, giving customers a single vendor for both general computing and AI workloads. This made Intel’s AI solutions attractive to enterprises already relying on Xeon processors for their data center operations, allowing them to add AI acceleration to their existing infrastructure rather than adopt an entirely separate platform.

Intel’s Focus on Edge AI and 5G

In addition to cloud-based AI, Intel recognized the growing importance of AI at the edge, where devices such as autonomous vehicles, industrial robots, and smart cameras perform AI tasks locally rather than relying on a centralized cloud service. Edge AI involves processing data directly on devices with limited computing resources, making it essential to design hardware that is optimized for energy efficiency and real-time performance.

Intel’s strategy for edge AI included the development of a new class of processors, including the Intel Movidius Myriad X Vision Processing Unit (VPU) and the Intel Agilex FPGAs (Field Programmable Gate Arrays). These processors were designed to handle AI inference tasks at the edge, providing fast, low-latency processing for applications such as autonomous driving, computer vision, and industrial automation.

Intel also saw the convergence of AI and 5G as a major growth area. The deployment of 5G networks, which promise significantly higher speeds and lower latencies, creates new opportunities for AI-powered applications in industries such as healthcare, manufacturing, and entertainment. Intel has been actively involved in developing 5G infrastructure, and its AI hardware solutions are expected to play a central role in enabling real-time, data-intensive applications that rely on both AI and 5G technologies.

AI Software and Ecosystem Development

Beyond hardware, Intel recognized that the success of AI technologies relied not just on processing power but also on the software ecosystem that supported them. Intel invested heavily in AI software frameworks, tools, and libraries, partnering with companies and research institutions to optimize its hardware for the latest AI algorithms. Intel’s AI software solutions, including its OpenVINO toolkit, provided developers with tools to optimize their AI models for Intel hardware, ensuring that they could take full advantage of the performance gains offered by Intel’s processors and accelerators.

Intel also focused on creating an open ecosystem for AI development, ensuring that its hardware could support a wide range of AI frameworks, such as TensorFlow, PyTorch, and Apache MXNet. This open approach allowed Intel to cater to a broad set of developers, researchers, and enterprises, helping to foster innovation and accelerate the adoption of AI technologies.

Future Prospects for Intel in AI

As AI continues to evolve and reshape industries, Intel is well-positioned to capitalize on the growing demand for AI computing power. The company’s continued investment in AI hardware, software, and ecosystem development will ensure that it remains a key player in this space. Moreover, Intel’s expertise in semiconductor manufacturing, combined with its growing portfolio of AI-specific processors, gives the company a competitive edge in an increasingly crowded AI market.

Looking to the future, Intel is likely to continue expanding its AI capabilities, focusing on areas such as neuromorphic computing, quantum computing, and the integration of AI with other emerging technologies like 5G and edge computing. As AI becomes more ubiquitous in society, Intel’s hardware and software solutions will play a crucial role in driving the next wave of technological innovation.

Intel’s Impact on Global Semiconductor Industry and Market Trends

Intel’s influence on the semiconductor industry is undeniable, as the company has shaped not only the hardware landscape but also the very structure of the global market. Through its innovations, market strategies, and leadership in microprocessor technology, Intel has maintained a dominant position for decades. However, its influence extends beyond the boundaries of its own product offerings, as it has contributed to broader industry trends, standards, and economic shifts that continue to reverberate today.

Intel’s Market Leadership and the Rise of the Personal Computer

Intel’s early contributions to the semiconductor market were foundational in the growth of the personal computer (PC) industry. The introduction of the Intel 4004 microprocessor in 1971 marked the beginning of the company’s dominance in the field of microelectronics. It was the world’s first commercially available microprocessor, setting the stage for the explosion of personal computing in the 1970s and 1980s. By developing processors that could execute general-purpose computing tasks, Intel revolutionized the way people interacted with technology, powering an entire generation of home and business PCs.

Intel’s leadership continued with the x86 architecture, introduced with the 8086 processor in 1978, which became the industry standard for personal computing. The x86 architecture offered a platform for compatibility across a wide range of devices, from desktops and laptops to servers and embedded systems. The success of Intel’s microprocessors, especially in the consumer PC market, established the company as a leader in semiconductor manufacturing and positioned it as a key player in the global economy.

The Foundry Model and Semiconductor Manufacturing Leadership

Intel’s market influence was not limited to designing processors. The company has long been a pioneer in semiconductor manufacturing, creating some of the most advanced manufacturing processes in the world. For much of its history, Intel controlled every aspect of its production, from designing the chips to manufacturing them in-house at its own fabrication plants (fabs). This vertical integration allowed Intel to maintain strict quality control, accelerate product development cycles, and keep a competitive edge in the race to deliver cutting-edge microprocessors.

Intel’s focus on refining manufacturing techniques, guided by Moore’s Law, the observation by co-founder Gordon Moore that transistor counts double roughly every two years, allowed the company to push the boundaries of chip performance. By continually shrinking transistor sizes and increasing the number of transistors on each chip, Intel was able to deliver increasingly powerful processors while lowering costs. This process of miniaturization, known as “scaling,” became a hallmark of Intel’s dominance in the semiconductor industry, and it led to a series of breakthroughs in computing power.
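
The doubling described by Moore’s Law can be made concrete with a little arithmetic. The following is a rough sketch, assuming a clean two-year doubling period and using the Intel 4004’s roughly 2,300 transistors as the starting point. Real products do not follow the curve exactly, and the function name is illustrative.

```python
def projected_transistors(start_count: float, years: float,
                          doubling_period: float = 2.0) -> float:
    """Project a transistor count forward, assuming one doubling per period."""
    return start_count * 2 ** (years / doubling_period)


if __name__ == "__main__":
    start = 2_300  # approximate transistor count of the Intel 4004 (1971)
    for elapsed in (0, 10, 20, 40):
        year = 1971 + elapsed
        print(year, f"{projected_transistors(start, elapsed):,.0f}")
```

Forty years of two-year doublings is twenty doublings, a factor of about one million, which is why steady scaling compounds into such large gains.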

However, Intel’s strategy began to face challenges in the 2010s. While it was initially able to keep up with the industry’s demand for smaller, more powerful chips, delays in its 10nm process and difficulty keeping pace with competitors like Taiwan Semiconductor Manufacturing Company (TSMC) became increasingly apparent. As a result, Intel was forced to change its strategy, turning to third-party foundries to manufacture some of its leading products.

Emergence of Competition and the Foundry Shift

The shift toward outsourcing manufacturing became necessary as Intel faced increased competition from companies like AMD and Arm, which were gaining traction with their own advanced semiconductor technologies. Advanced Micro Devices (AMD), Intel’s primary rival in the CPU market, began to make substantial inroads in the high-performance computing space with its Ryzen and EPYC processors. The rise of Arm-based processors, which offered better energy efficiency, also kept Intel from establishing a strong position in mobile devices and challenged it in IoT (Internet of Things) systems.

Intel’s response to these competitive pressures was multifaceted. The company invested heavily in research and development (R&D) to improve its own manufacturing processes, but it also began to explore new partnerships and collaborations with third-party foundries, such as TSMC. This shift marked a significant change in Intel’s business model, which had long been centered on in-house production. By outsourcing some of its manufacturing needs to external foundries, Intel hoped to alleviate capacity constraints and improve its competitiveness in the face of increasing pressure from rivals.

The foundry shift also reflected a broader trend in the semiconductor industry, where companies like TSMC and Samsung had taken on an increasingly important role in global chip production. TSMC in particular had become the leading global foundry, supplying chips to tech giants such as Apple, Nvidia, and Qualcomm, well before Intel began outsourcing more of its own manufacturing. The growing importance of third-party foundries has reshaped the semiconductor landscape, creating new dynamics of competition and collaboration.

Shifting Global Semiconductor Supply Chain Dynamics

Intel’s decision to work with external foundries also reflects the changing dynamics of the global semiconductor supply chain. For decades, the semiconductor industry was concentrated in a few key regions, particularly the United States, Japan, South Korea, and Taiwan. However, as demand for chips surged due to the proliferation of smartphones, data centers, and other digital devices, the global supply chain became increasingly complex and geographically distributed.

The rise of China as both a major consumer and manufacturer of semiconductor components has had a significant impact on global supply chain trends. Intel, along with other leading chipmakers, had to navigate the geopolitical tensions surrounding China’s ambitions to become a global leader in semiconductor manufacturing. While Intel remains one of the largest producers of semiconductors, it has also faced increased competition from Chinese companies like SMIC (Semiconductor Manufacturing International Corporation), which has been ramping up its production of advanced chips. Intel’s ability to maintain its market position in this new environment will depend not only on its technological innovations but also on its ability to navigate the shifting global trade landscape.

The Role of Intel in Semiconductor Industry Standards

Throughout its history, Intel has played a key role in shaping the standards that govern the semiconductor industry. From the early days of the x86 architecture to its leadership in the development of microprocessor performance benchmarks, Intel has been instrumental in defining the parameters by which chip performance is measured. Its influence in setting industry standards extends beyond just hardware, as the company has also contributed to software development and the creation of industry-wide initiatives.

For example, Intel’s Hyper-Threading technology, its implementation of simultaneous multithreading that lets a single processor core run two threads of execution at once, helped make multithreaded cores a common feature across the computing industry. Similarly, Intel’s work on building graphics into its processors helped to establish a new norm for energy-efficient computing in laptops and other mobile PCs.
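
One visible effect of Hyper-Threading is that the operating system sees more logical processors than there are physical cores. The following sketch counts both, assuming Linux-style /proc/cpuinfo text in which each logical processor is a blank-line-separated block carrying physical id and core id fields. Those fields can be absent on some platforms, and the function name and sample text are illustrative.

```python
def count_logical_and_physical(cpuinfo_text: str) -> tuple[int, int]:
    """Return (logical_processors, physical_cores) from cpuinfo-style text."""
    logical = 0
    cores = set()
    for block in cpuinfo_text.strip().split("\n\n"):
        fields = {}
        for line in block.splitlines():
            key, _, value = line.partition(":")
            fields[key.strip()] = value.strip()
        if "processor" in fields:
            logical += 1
            # A physical core is identified by its socket and core numbers.
            cores.add((fields.get("physical id"), fields.get("core id")))
    return logical, len(cores)


# Hypothetical sample: one physical core exposing two logical processors.
SAMPLE_SMT = """processor : 0
physical id : 0
core id : 0

processor : 1
physical id : 0
core id : 0
"""

if __name__ == "__main__":
    logical, physical = count_logical_and_physical(SAMPLE_SMT)
    print(f"{logical} logical processors on {physical} physical core(s)")
```

When the logical count is twice the physical count, as in the sample, each core is presenting two hardware threads.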

Intel’s leadership in these areas has had lasting effects on the broader semiconductor ecosystem, pushing the entire industry to innovate and compete at higher levels of efficiency, performance, and scalability. The company’s continued contributions to emerging technologies, such as quantum computing, will likely have a profound impact on the future direction of the industry.

Conclusion: The Enduring Influence of Intel on the Semiconductor Market

Intel’s impact on the global semiconductor market has been immense and enduring. From its pioneering work in microprocessor technology to its innovations in semiconductor manufacturing and its role in defining industry standards, Intel has shaped the trajectory of the semiconductor industry for decades. Despite recent challenges from competitors and shifts in the global supply chain, Intel remains one of the most influential players in the semiconductor space, with its hardware and software innovations continuing to define the future of computing.

The company’s greater use of external foundries and its ongoing investments in AI, machine learning, and other cutting-edge technologies suggest that Intel intends to remain a major force in the semiconductor market. As the world increasingly depends on semiconductors to power everything from mobile phones to autonomous vehicles and data centers, Intel’s contributions are likely to keep shaping the global economy and technological innovation for years to come.

Conclusion

Intel’s journey from a small startup to one of the most influential companies in the semiconductor industry is a testament to its ability to innovate, adapt, and lead in an ever-changing technological landscape. Through groundbreaking advancements in microprocessor design, manufacturing, and industry standards, Intel has shaped not only the semiconductor market but also the entire technology ecosystem that powers modern life. The company’s pivotal role in the personal computer revolution, its contributions to mobile and cloud computing, and its recent shifts in strategy to address new challenges highlight its enduring influence.

Intel’s leadership in semiconductor innovation has helped the world move from bulky, primitive computers to the powerful, energy-efficient devices that fuel today’s digital world. The company’s products continue to be at the heart of everything from PCs and data centers to gaming and AI applications, impacting industries far beyond computing. Yet, despite its remarkable achievements, Intel’s path has not been without challenges. Increased competition, technological setbacks, and shifting geopolitical landscapes have forced the company to evolve its business model.

Looking ahead, Intel’s future remains promising. By embracing the foundry model, investing in new technologies like artificial intelligence and quantum computing, and committing to sustainable manufacturing practices, the company is positioning itself to remain a leader in the global semiconductor market. Its ongoing commitment to innovation will undoubtedly continue to drive advancements in technology, ensuring its place at the forefront of the semiconductor industry for years to come. Intel’s legacy, which has already shaped the past several decades, will likely play a central role in shaping the next generation of technological breakthroughs, further cementing its role as a cornerstone of the modern digital economy.